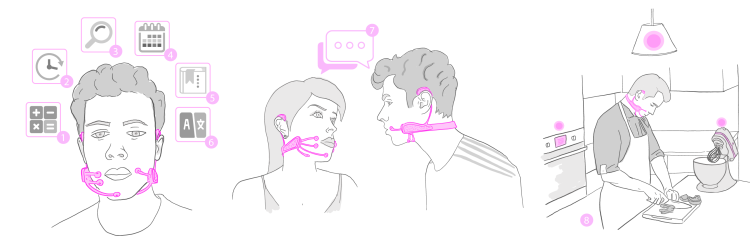

AlterEgo, A Wearable Device That Transmits Internal Speech Through Neuromuscular Facial Signals

Researchers at the MIT Media Lab have created AlterEgo, a remarkable wearable headset that can read and transcribe a person’s internal speech from neuromuscular signals in the face and jaw, and that responds to the wearer through bone conduction. The signals are fed into a machine learning system trained to associate particular signal patterns with particular words. The idea of the study was to seamlessly combine internal cognition with computer learning to create a hybrid extension of a second self.

AlterEgo is a closed-loop, non-invasive, wearable system that allows humans to converse in high-bandwidth natural language with machines, artificial intelligence assistants, services, and other people without any voice—without opening their mouth, and without any discernible movements—simply by vocalizing internally. The wearable captures electrical signals, induced by subtle but deliberate movements of internal speech articulators when a user intentionally vocalizes internally, in likeness to speaking to one’s self.

This amazing invention, while slightly overwhelming in theory, could eventually help people whose conditions affect their ability to speak communicate with the outside world.

...people who have disabilities where they can’t vocalize normally. For example, Roger Ebert did not have the ability to speak anymore because [he] lost his jaw to cancer. Could he do this sort of silent speech and then have a synthesizer that would speak the words?

photos via MIT Media Lab